Adaptive deep reinforcement learning-based control strategy for high-performance permanent magnet synchronous motor drive systems

DOI:

https://doi.org/10.20998/2074-272X.2026.3.07

Keywords:

deep reinforcement learning, permanent magnet synchronous motor, deep deterministic policy gradient, twin delayed deep deterministic policy gradient, adaptive motor control, actor-critic algorithm

Abstract

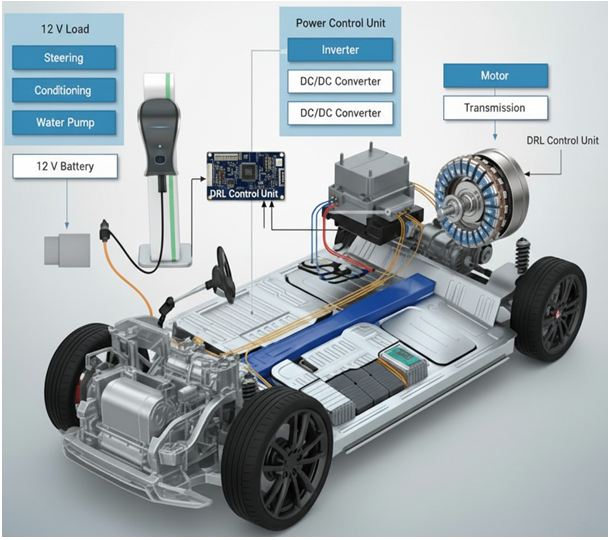

Introduction. Permanent magnet synchronous motors (PMSMs) are widely used in electric vehicles, robotics and many other control system applications. Problem. Because of the system's nonlinear behavior, external disturbances and frequent parameter changes, conventional control techniques such as direct torque control, field-oriented control and PI control often suffer degraded performance. Goal. This paper presents a new deep reinforcement learning (DRL) approach to PMSM control that uses the deep deterministic policy gradient (DDPG) and twin delayed deep deterministic policy gradient (TD3) algorithms. These algorithms use actor-critic architectures to learn optimal control policies in a model-free manner, enabling adaptive and intelligent motor control. Methodology. A MATLAB/Simulink-based simulation framework is developed to train the proposed DRL-based controllers and evaluate them against conventional PI controllers. Performance metrics, including speed tracking accuracy and torque ripple, are analyzed. Results. The DRL-based controllers exhibit better adaptability, robustness and dynamic performance than traditional control methods under varying load and speed conditions. Notably, the comparative analysis shows that the TD3 algorithm outperforms DDPG by mitigating overestimation bias, resulting in smoother torque output and more stable control actions. Scientific novelty. This paper demonstrates the capability of DRL for advanced PMSM control. Practical value. The results pave the way for real-time implementation in modern electric drive systems. References 25, tables 3, figures 12.
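The overestimation-bias point in the abstract can be illustrated with a minimal sketch of how the critic's learning target differs between DDPG and TD3 in their standard formulations. This is not the authors' MATLAB/Simulink implementation. The function names, the discount factor, the smoothing-noise settings and the numeric values below are illustrative assumptions, not taken from the paper.

```python
import numpy as np

def ddpg_target(reward, done, q_next, gamma=0.99):
    """DDPG: bootstrap from a single target critic's estimate."""
    return reward + gamma * (1.0 - done) * q_next

def td3_target(reward, done, q1_next, q2_next, gamma=0.99):
    """TD3: bootstrap from the smaller of two target critics' estimates
    (clipped double-Q), which counteracts overestimation."""
    return reward + gamma * (1.0 - done) * np.minimum(q1_next, q2_next)

def smoothed_target_action(action, rng, noise_std=0.2, noise_clip=0.5, act_limit=1.0):
    """TD3 target policy smoothing: add clipped Gaussian noise to the
    target actor's action, then clip to the (assumed) actuator range."""
    noise = np.clip(rng.normal(0.0, noise_std, size=action.shape),
                    -noise_clip, noise_clip)
    return np.clip(action + noise, -act_limit, act_limit)

# Hypothetical mini-batch of two transitions (values are made up).
reward = np.array([1.0, 0.5])
done = np.array([0.0, 1.0])      # second transition ends the episode
q1_next = np.array([10.0, 4.0])  # target critic 1 estimates
q2_next = np.array([8.0, 6.0])   # target critic 2 estimates

y_ddpg = ddpg_target(reward, done, q1_next)
y_td3 = td3_target(reward, done, q1_next, q2_next)

# Example of a smoothed (normalized) target action for a two-input controller.
rng = np.random.default_rng(0)
a_smooth = smoothed_target_action(np.array([0.9, -0.3]), rng)
```

In this toy batch the TD3 target for the first transition is lower than the DDPG target because the more pessimistic critic is used. TD3 additionally updates the actor less often than the critics (the "delayed" part), which is omitted here for brevity.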

License

Copyright (c) 2026 S. Dukkipati, S. S. Nagendra, E. Parimalasundar, B. H. Kumar

This work is licensed under a Creative Commons Attribution-NonCommercial 4.0 International License.

Authors who publish with this journal agree to the following terms:

1. Authors retain copyright and grant the journal right of first publication with the work simultaneously licensed under a Creative Commons Attribution License that allows others to share the work with an acknowledgement of the work's authorship and initial publication in this journal.

2. Authors are able to enter into separate, additional contractual arrangements for the non-exclusive distribution of the journal's published version of the work (e.g., post it to an institutional repository or publish it in a book), with an acknowledgement of its initial publication in this journal.

3. Authors are permitted and encouraged to post their work online (e.g., in institutional repositories or on their website) prior to and during the submission process, as it can lead to productive exchanges, as well as earlier and greater citation of published work.